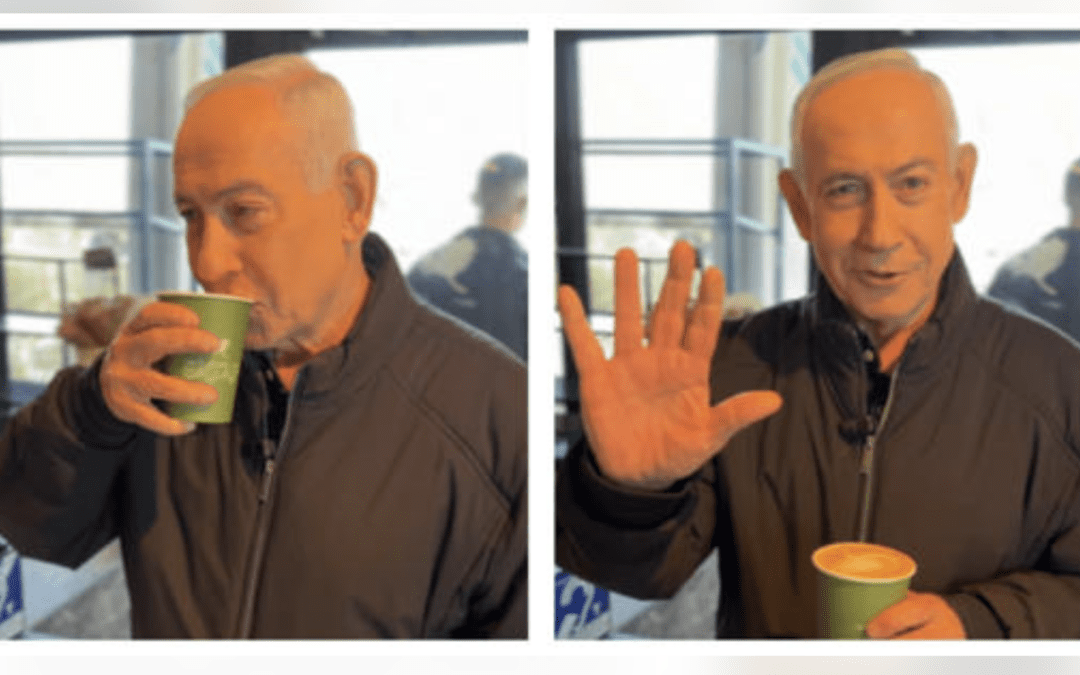

A video of Benjamin Netanyahu sitting in a coffee shop recently circulated online.

It appeared to serve a simple purpose: to show that he was alive and well. But instead of putting speculation to rest, it sparked even more questions.

People zoomed in on his hands. They questioned the coffee, the lighting, the movement, and the details in the frame. Some argued the video was AI-generated. Others insisted it was real.

But the most important takeaway was not whether the video was fake.

It was this: Even when we are looking directly at something, we are no longer entirely sure what we are seeing.

And that is not just a content problem. It is a trust problem.

The Problem Isn’t Just AI. It’s the Environment.

We are no longer living in a world where content falls neatly into obvious categories.

What you see online today could be real, unedited footage; edited or manipulated video; or fully AI-generated synthetic media. Increasingly, those categories are difficult for the average viewer to distinguish, especially when content is short, compressed, reposted, or stripped of context.

A shadow may look strange. A hand may appear distorted. An object may seem to move unnaturally. But those same oddities can also be caused by ordinary things like compression, lighting, camera angles, or platform distortion.

In other words, many of the “clues” people rely on to identify AI can also appear in real footage. That is where confusion begins.

What Counts as Evidence Anymore? A Legal Perspective

From a legal standpoint, evidence has never been about appearances alone.

In court, a video is not accepted simply because it exists. It must be authenticated, meaning it must be shown to be what it claims to be. It must be reliable, meaning it has not been materially altered or distorted. And it must be relevant to the issue at hand.

This is why courts often require testimony about where evidence came from, metadata or chain of custody, and corroborating sources.

The legal system understands something the internet often forgets: Seeing something is not the same as proving something. And as AI continues to evolve, that distinction becomes even more important.

The AI Reality Check Framework

Instead of trying to “spot AI” based on instinct alone, we need a more disciplined way to evaluate what we are seeing.

Here is a practical framework:

1. Source

Where did this content originate?

Was it posted by an official account, a verified outlet, or an anonymous repost page? If you cannot identify the source, that should immediately slow you down.

2. Context

What might be missing?

Is this the full clip? Has it been cut down, reposted, or stripped of surrounding context? What happened before and after the moment you are seeing? Context often changes interpretation more than the image itself.

3. Consistency

Do the details behave naturally?

Do movements look fluid? Do objects behave as expected? Are there visual anomalies? An anomaly is not proof of manipulation, but it is a reason to investigate further.

4. Corroboration

Is there independent confirmation?

Are other credible outlets reporting the same event? Are there additional angles or recordings? Has anyone verified the location, timing, or circumstances? Corroboration remains one of the strongest indicators of reliability.

What People Get Wrong

In today’s environment, people are often more alert, but not always more accurate.

A common mistake is assuming that if something looks weird, it must be fake. Not necessarily. Real footage can look strange for completely ordinary reasons. Another mistake is assuming that if something looks normal, it must be real. Also not true. AI-generated content can now appear highly realistic.

And of course, virality does not equal truth. A video spreading quickly may reflect attention, outrage, or confusion. It does not tell you whether the content is authentic.

The Bigger Shift

What we are experiencing is not just an increase in AI-generated content. It is a deeper shift in how reality is interpreted.

We are moving from a world where “seeing is believing” to one where seeing requires interpretation. And without the tools to interpret what we see, people are left to rely on instinct, emotion, and assumption. That is a dangerous place to be in an information environment shaped by speed, virality, and manipulation.

From Confusion to Discernment

The goal is not to believe everything you see. And it is not to doubt everything either. The goal is to develop discernment.

Everyone should make it a habit to ask: Where did this come from? What am I actually looking at? What evidence supports this?

Because in a world where proof is becoming harder to define, your ability to think clearly becomes one of your greatest protections.

For a deeper breakdown of how to evaluate moments like this, watch my video, “We Can’t Tell What’s Real Anymore… Netanyahu Video Shows Why.”

Technology may have been miseducated. But you do not have to be.